Look at This: AI Dungeon

AI Dungeon is an endless text adventure game with impressively open natural language interactions, built on a fine-tuned GPT-2 model.

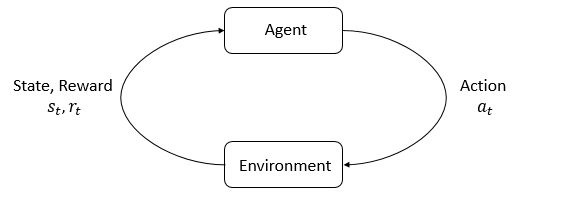

Deep RL Fundamentals #1: Elements of RL

A breakdown of the parts of the reinforcement learning problem and of the algorithms that solve it.

Cox's Theorem: Establishing Probability Theory

Cox's theorem is the strongest argument for the use of standard probability theory. Here we examine the axioms to establish a firm foundation for the interpretation of probability theory as the unique extension of true-false logic to degrees of belief.

Comments on Eight Abstracts

An unfocused sweep of eight abstracts from a very busy week in AI research: Emergent tool use, why hierarchical learning can work so well, brain-inspired hardware for artificial neural networks, pretraining and transfer learning for RL, chromatic network compression, semi-supervised reward shaping, WGAN model imitation for model-based RL, and navigation in turbulent flows!

Active Perception in Adversarial Scenarios

Accumulating evidence about peers to discriminate potential threats.

Discovery of Useful Questions as Auxiliary Tasks

Learning more like a human, and more like a scientist, by actively seeking useful auxiliary questions during learning.

Deep Reinforcement Learning without Catastrophic Forgetting

Long-term learning of multiple tasks without forgetting old skills, using a new technique called Pseudo-Rehearsal.

Reward Tampering

Improving safety and control by preventing all manner of reward tampering by the agent itself.

DRL Not Superhuman on Atari

Why DRL may not be superhuman on Atari after all, and how to avoid similar mistakes in the future.

An introduction and statement of purpose for a series on the basics of deep reinforcement learning.